Yıldız, Beytullah

Name Variants

Yıldız, Beytullah

B.,Yildiz

Yildiz, B

B., Yildiz

B., Yıldız

Beytullah, Yildiz

Y.,Beytullah

Yildiz,B.

Y., Beytullah

Yıldız,B.

Beytullah, Yıldız

Yildiz, Beytullah

B.,Yıldız

Job Title

Associate Professor (Doçent Doktor)

Email Address

beytullah.yildiz@atilim.edu.tr

Main Affiliation

Software Engineering

Sustainable Development Goals

| SDG | Goal | Research Products |
|---|---|---|
| 2 | ZERO HUNGER | 0 |
| 11 | SUSTAINABLE CITIES AND COMMUNITIES | 0 |
| 14 | LIFE BELOW WATER | 0 |
| 6 | CLEAN WATER AND SANITATION | 0 |
| 1 | NO POVERTY | 0 |
| 5 | GENDER EQUALITY | 0 |
| 9 | INDUSTRY, INNOVATION AND INFRASTRUCTURE | 0 |
| 16 | PEACE, JUSTICE AND STRONG INSTITUTIONS | 0 |
| 17 | PARTNERSHIPS FOR THE GOALS | 0 |
| 15 | LIFE ON LAND | 0 |
| 10 | REDUCED INEQUALITIES | 0 |
| 7 | AFFORDABLE AND CLEAN ENERGY | 0 |
| 8 | DECENT WORK AND ECONOMIC GROWTH | 0 |
| 4 | QUALITY EDUCATION | 0 |
| 12 | RESPONSIBLE CONSUMPTION AND PRODUCTION | 0 |
| 3 | GOOD HEALTH AND WELL-BEING | 2 |
| 13 | CLIMATE ACTION | 0 |

Documents

15

Citations

166

h-index

8

Documents

15

Citations

85

Scholarly Output

18

Articles

7

Views / Downloads

92/711

Supervised MSc Theses

6

Supervised PhD Theses

0

WoS Citation Count

60

Scopus Citation Count

136

WoS h-index

5

Scopus h-index

6

Patents

0

Projects

0

WoS Citations per Publication

3.33

Scopus Citations per Publication

7.56

Open Access Source

2

Supervised Theses

6

| Journal | Count |
|---|---|
| Concurrency and Computation: Practice and Experience | 4 |
| IEEE Access | 1 |
| International Conference on Computational Science and Computational Intelligence (CSCI) -- DEC 13-15, 2023 -- Las Vegas, NV | 1 |
| International Journal on Artificial Intelligence Tools | 1 |
| Lecture Notes in Networks and Systems -- International Conference on Computing, Intelligence and Data Analytics, ICCIDA 2022 -- 16 September 2022 through 17 September 2022 -- Kocaeli -- 291929 | 1 |

Current Page: 1 / 2

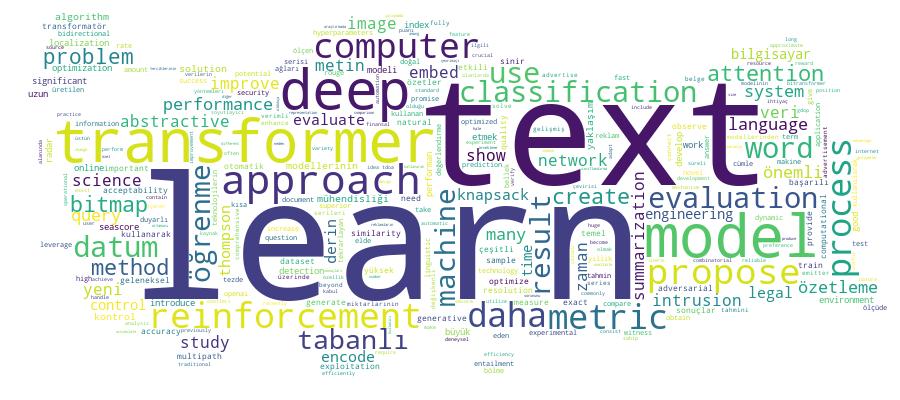

Competency Cloud