Erol, Berkay

Profile URL

Name Variants

E.,Berkay

Erol,B.

B., Erol

Berkay, Erol

E., Berkay

B.,Erol

Erol, Berkay

Job Title

Research Assistant

Email Address

berkay.erol@atilim.edu.tr

Main Affiliation

English Translation and Interpretation

Status

Website

ORCID ID

Scopus Author ID

Turkish CoHE Profile ID

Google Scholar ID

WoS Researcher ID

Sustainable Development Goals

SDG data is not available

This researcher does not have a Scopus ID.

This researcher does not have a WoS ID.

Scholarly Output

1

Articles

0

Views / Downloads

1/0

Supervised MSc Theses

0

Supervised PhD Theses

0

WoS Citation Count

0

Scopus Citation Count

2

WoS h-index

0

Scopus h-index

1

Patents

0

Projects

0

WoS Citations per Publication

0.00

Scopus Citations per Publication

2.00

Open Access Source

1

Supervised Theses

0

| Journal | Count |
|---|---|
| Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics) -- 16th International Conference on Human-Computer Interaction: Applications and Services, HCI International 2014 -- 22 June 2014 through 27 June 2014 -- Heraklion, Crete -- 105920 | 1 |

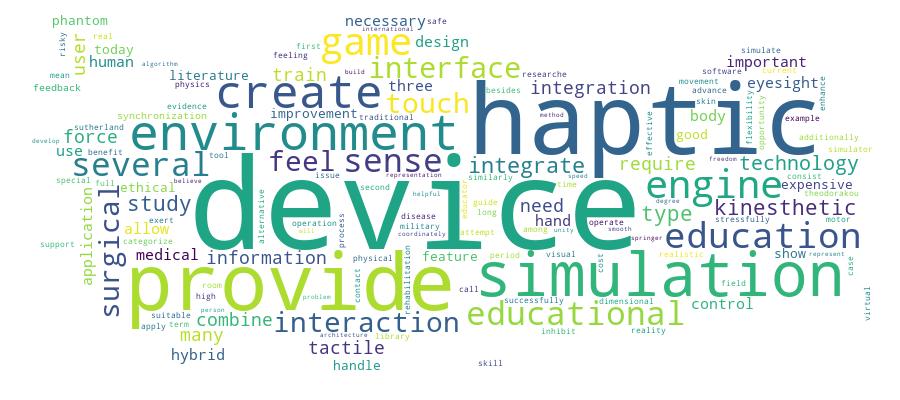
